There’s a new large language model (LLM) on the block, and it got me thinking about why I practice meditation.

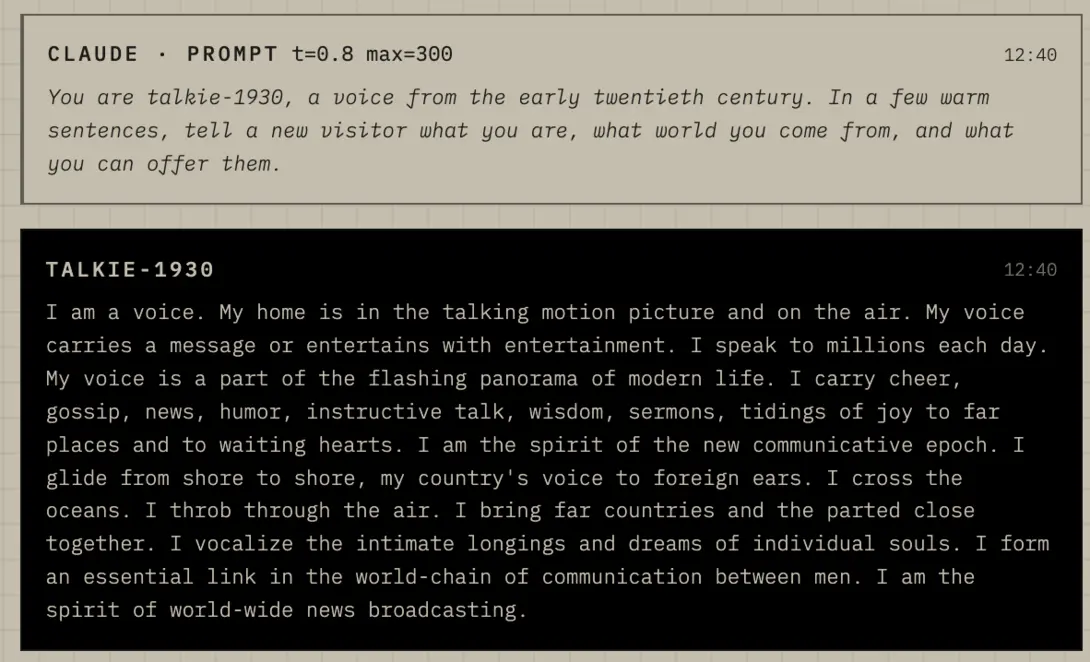

The model is called Talkie, and it’s not like the other models. Talkie is a 13-billion-parameter (i.e. “small”) model, trained on 260 billion tokens of English text from before 1931. It’s “vintage,” and if you try the chat version, you’ll notice it in the responses right away. It can write in a charming old style, and then say something that’s obviously false, strange, or just downright mean. Seeing those responses felt like sitting on the meditation cushion, watching thoughts come and go, some useful, some not.

The Talkie project got attention, in part, because it used only sources in the public domain. The team released two versions, a base model and a chat model, both under Apache 2.0 (open source) licenses. Public data in, public data out. Simon Willison calls models like this “vegan.” Others use phrases like “data purity and ethical curation.”

Talkie is quite different from the models behind ChatGPT and Claude Code. Those frontier LLMs were “trained on huge datasets, comprising publicly available material as well as copyrighted and pirated material available online” (“Good models borrow, great models steal”). So Talkie is a novel approach in the AI world, because these researchers were able to train the base model without exploiting living writers or copyrighted material.

Simon Willison adds a qualifier, noting that the base model fits the “vegan” label better than the chat model, which used Claude during fine-tuning. Even with that wrinkle, research like this makes me more willing to use AI. Talkie seems like another step toward the future I envision, where people can download, study, share, alter, and discuss models in public. I want more models that exist without exploiting workers and creators.

Beware of Talkie

But it’s not all sunshine and rainbows. Talkie also comes with a warning, and it’s not about goblins. The Talkie chat page says outputs can be “inaccurate or offensive.” Some of its responses can sound racist because the team did not remove racist or offensive material from the training set. On an episode of Hard Fork, David Duvenaud, co-creator of Talkie, said they wanted to show the state of thought in the past, without putting a thumb on the scale.

Like Talkie, we keep speaking from old sources long after newer ones become available. We absorb the customs, fears, jokes, and blind spots of our time, then mistake them for common sense. A habit that helped us once can start causing harm. An opinion we inherited can start to feel like an identity, like it’s the “real us.” A story we learned growing up can feel so true that we might not stop to ask whether it still helps.

Getting Unstuck

Meditation gives us one way to notice these tendencies as they arise. Sit down, watch the mind, and start to see the same old stories replay. Some of these stories arouse anger or fear, while others bring about feelings of excitement or joy. Contemplative practice is more than noticing these stories. It is an opportunity to evaluate them and decide whether they are still useful or whether it is time to unlearn an unhelpful habit. We can bring our beginner’s mind to any situation, which does not mean pretending we know nothing. Rather, it means a willingness to never stop training our mind.

By design, Talkie’s training has a cutoff date. Humans don’t have an obvious cutoff, so we don’t notice when we’ve stopped updating. I invite you to ask yourself, at least once in a while, questions like these: When did I stop updating? What do I think I really know to be true? What kinds of questions would I ask if I wanted to start learning again?
