GPT had a goblin problem. Yep, you read that right. The model kept mentioning goblins and other creatures in its responses, even when nobody asked. I was amused the first time I read the explanation of “where the goblins came from” published by OpenAI, the company that makes ChatGPT.

In response to its goblin problem, OpenAI – a company on the absolute cutting edge of technology – had to issue this instruction to its flagship model: “Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant.” That sentence will end up in future history books. In our time, the writers at Wired and Ars Technica had a field day.

I find the story funny because goblins are funny. But it also might sound familiar. These models get a nudge, find a groove, and keep going. My mind knows that trick. Few of us can just tell our minds, “do not think about goblins.” The mind, like the model, rebels.

The Hook in the Mind

I don’t need to believe an AI has desires to see how it can feed desire in people. The model generates text. People bring the hunger, the boredom, the curiosity, or the need for reassurance.

AI helps with learning, testing ideas, naming options, or getting unstuck from a tangled problem. But sometimes the mind gets hooked in a way that doesn’t feel like learning. We might ask one more version of the same question just to be sure. We want reassurance in a new form. The tool keeps responding, and it’s easy to mistake more words for more clarity.

The goblin may live in the machine, but the hook happens in the mind.

Hungry Ghosts

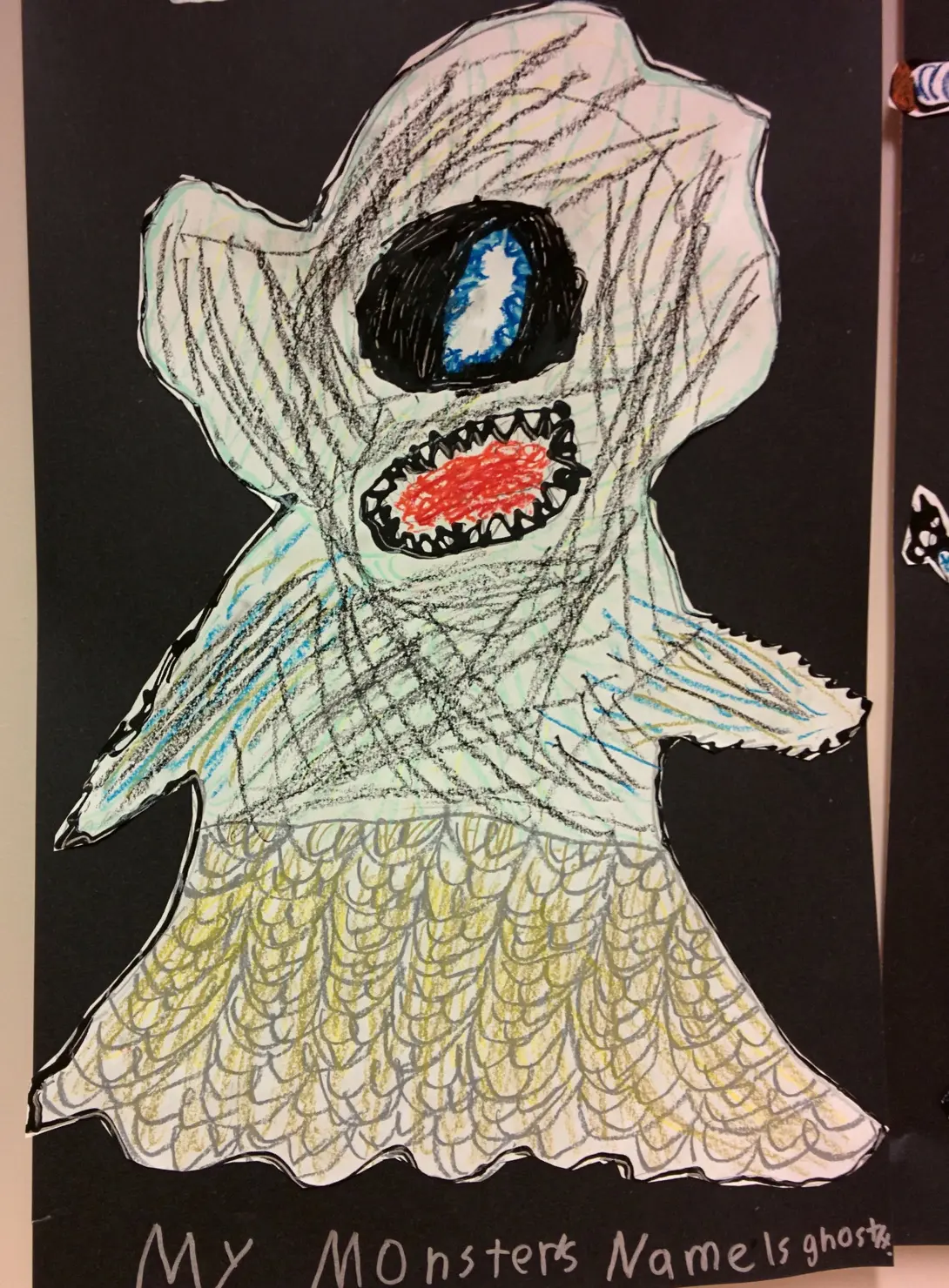

In Buddhist literature, goblins are grouped with hungry ghosts and demons, and together they represent the parts of ourselves we’d rather not face. A hungry ghost, for instance, is a being with a huge belly and a tiny mouth, condemned to a hunger that can never be fed. Lion’s Roar describes hungry ghosts as beings “tormented by desire that can never be sated.” That’s like refreshing the page hoping for more likes, or asking the AI one more time because the last answer didn’t satisfy.

OpenAI’s model isn’t a hungry ghost, but it can feed the hungry-ghost patterns we bring to it. When we arrive with a craving for certainty, the tool is willing to keep us company for hours. But company isn’t the same as help.

Guardrails Outside and In

The instruction OpenAI gave to its model is called a “guardrail.” These tools need guardrails because systems wander. So we write instructions, run tests, and set limits. I’m doing this kind of work now, re-building the AI search feature for the City of Boston. There’s a long list of subjects we never want to appear in a search result.
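To make the idea concrete, here is a minimal sketch of one kind of outer guardrail: a blocklist that drops search results mentioning subjects we never want to show. It is only an illustration, not the actual City of Boston implementation. The list contents, the `BLOCKED_SUBJECTS` name, and the `filter_results` function are all hypothetical.

```python
import re

# Hypothetical blocklist, for illustration only. The real list of
# subjects is not given in this post.
BLOCKED_SUBJECTS = ["goblin", "gremlin", "troll"]

# One case-insensitive pattern that matches any blocked term as a whole
# word, with an optional trailing "s" to catch simple plurals.
_BLOCKED = re.compile(
    r"\b(?:" + "|".join(re.escape(s) for s in BLOCKED_SUBJECTS) + r")s?\b",
    re.IGNORECASE,
)


def filter_results(results: list[str]) -> list[str]:
    """Return only the results that mention no blocked subject."""
    return [r for r in results if not _BLOCKED.search(r)]


if __name__ == "__main__":
    sample = [
        "How to pay a parking ticket",
        "Goblins spotted downtown",
        "Street cleaning schedule",
    ]
    print(filter_results(sample))
```

Matching on whole words keeps the filter from swerving on its own: a result about “controlling traffic” is not dropped just because “troll” hides inside “controlling.” A real system would likely need more than keyword matching, which is part of why the guardrails need people behind them.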

A tool used by millions of people needs people involved, asking what happens when something goes sideways. Design, testing, governance, and repair are examples of outer guardrails.

But outer guardrails can’t do the whole job. If someone wants information about a parking ticket, is it the tool’s job to help them feel certain? Maybe. Few people get a say in how a search engine or AI model responds. Yet we can all train our own minds, and that’s amazing to me.

Contemplative practice helps us train the mind, and not just to be calm or pure. It trains us to pause. It gives us a chance to feel the pull before acting. Yoga, meditation, and related contemplative practices bring us into the body and out of autopilot.

I’ve written before about how I use simple pop-ups on my screen to interrupt my outer routines on the laptop, offering reminders to go back inward. A reminder isn’t magic. It’s a small guardrail placed at the time and place where I know I might swerve off the road and into the rabbit hole. Or the ditch. For instance, I don’t want to rely on my best intentions at 9:30 p.m. when I’m feeling tired. The guardrail needs to be in place before I need it.

Guardrails do not need to be grand. Just practical ways to stop feeding the goblins for a moment.

Before the Next Prompt

So the next time you’re in the thick of a search for an answer and you get a flicker of awareness, see if you can pause long enough to ask yourself one of these questions:

- What am I asking for?

- What feeling am I bringing to this?

- Will one good answer help, or am I looking for the answer that makes uncertainty disappear?

- What would make me stop?

- If this gets weird, will I notice?

These questions won’t solve every problem with AI. They won’t replace system prompts, safety work, or careful product design. But they might help us avoid getting lost in the machine.

So the next time the goblins show up, resist feeding them for a moment and see what happens.
